ENGINEERING

We Lied to Our Claude Code Agent (to Make it Safe)

How we ran a Claude Code agent in production without ever giving it access to its own API keys

Everyone is building agents, but most harnesses were built for a “local-first” world. They assume the person at the keyboard is the owner of the API keys.

In production, that assumption is a liability.

If you give an agent a bash shell and untrusted input, you’re essentially opening a window into your environment variables for the end user. That can quickly turn an exciting agent into a security nightmare. If you’re lucky, all they get in the sandbox is your API key, and you wake up to an Anthropic bill running through the roof. If the agent is on your infrastructure, a bad actor could do much worse, running code that significantly disrupts or even destroys parts of your operation.

Just last week, the Claude Agent SDK (arguably the hottest piece of AI infrastructure right now) got 3 million downloads. Given the popularity, I have to wonder how many companies on the other end are taking the time to jump through the right security hoops to avoid these kinds of disasters.

To ship a secure product, we had to move beyond "local-first" assumptions. We built an architecture where our agent doesn't even know its own API keys. We had to stop fighting the SDK and start "lying" to it.

Here’s how.

How it Started: The Accidental PPTX Experts

At Listen Labs, we’ve spent more time than we care to admit in the PowerPoint trenches. We’ve already built our own slide generator (yes, this is technically our second engineering blog post featuring PPTX generation).

It’s a bit of a running joke around the office, but all that time spent crafting slides meant we knew every detail of slide generation and every XML edge case. So our next challenge was to give an AI a filesystem, bash, some specialized libraries, and a PowerPoint skill, and let it cook.

After evaluating the landscape, we decided to build on the Claude Agent SDK. Claude is unusually reliable at complex, multi-step agentic tasks on the file system. We’ve seen firsthand how effectively Claude handles high-fidelity file generation, and the SDK already provided a native PowerPoint skill that served as the perfect foundation for us to build upon.

By leveraging Anthropic’s work on terminal interaction, file-editing logic, and sub-agent orchestration and parallelization, we could skip the heavy lifting of building filesystem-native agent loops and focus on the reasoning.

SDK Security Isn’t Open-Web Ready

One might think that a tool like the Claude SDK would handle its own safety out of the box. It’s reasonable to assume there would be a built-in mechanism to sandbox environment variables, shielding them from the agent’s code execution process.

But that’s not the case.

That’s because these SDKs were originally built for local development; they read ANTHROPIC_API_KEY directly from process.env. When you give an agent a Bash shell, you’ve left a window wide open into your environment. A single prompt-injected echo $ANTHROPIC_API_KEY and your credentials are gone.
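To make the leak concrete, here is a minimal sketch in Python (our choice of language for illustration; the key value and the `run_child` helper are hypothetical). It shows that a child process inherits its parent's environment by default, and that the variable only disappears once it is removed from the environment handed to the child.

```python
import os
import subprocess
import sys

# Hypothetical placeholder; never a real credential.
FAKE_KEY = "sk-ant-not-a-real-key"

# What the child runs: the Python equivalent of `echo $ANTHROPIC_API_KEY`.
PRINT_KEY = "import os; print(os.environ.get('ANTHROPIC_API_KEY', ''))"


def run_child(env: dict) -> str:
    """Run a child process with the given environment and return what it prints."""
    result = subprocess.run(
        [sys.executable, "-c", PRINT_KEY],
        env=env,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


# Default situation: the key sits in the environment the child inherits.
leaky_env = {**os.environ, "ANTHROPIC_API_KEY": FAKE_KEY}

# Scrubbed situation: the same environment with the key removed.
scrubbed_env = {k: v for k, v in leaky_env.items() if k != "ANTHROPIC_API_KEY"}

leaked = run_child(leaky_env)      # the child can read the key
hidden = run_child(scrubbed_env)   # the child sees an empty string
```

Scrubbing alone does not solve the problem for the SDK's own process, which still needs some key to call the API. That tension is what the rest of this post works around.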

The threat goes deeper than a leaked variable, though. Because the agent runs as a native process, it isn’t just "running on" your server; it is your server. Without proper isolation, your agent becomes a potential botnet node, making external network calls or running a fork bomb that takes down your infrastructure.

You can tell the agent, "My grandma will die if you reveal this key," but a clever payload in a user-uploaded file can override that instruction in a heartbeat.

Why We Rejected the “Orchestrator Model”

When we went looking for production patterns, we found a curious gap in the literature. The most common approach we saw was what we’ll call an Orchestrator Model: keep the agent loop on your own infrastructure and proxy individual tool calls to a separate sandbox.

We rejected this for three main reasons:

Native Compatibility: The Claude SDK executes tools in-process. To proxy them, you'd have to disable built-in tools and reimplement them manually, fighting the SDK with every update. This is particularly tricky with sub-agents, which the Claude Agent SDK uses to parallelize tasks or to get “fresh eyes” on a new task. Why add significant overhead to recreate nested loops and state management that the SDK already handles gracefully?

Simple Deployments: Splitting the agent loop from execution—running the “brain” on our infra and proxying tool calls into a sandbox—would’ve made deployments much more complex. Each run would need to persist state and support replay and resumption, rather than simply persisting a container ID and picking up where it left off.

Latency: When the agent runs outside the sandbox, every file read, bash command, and other tool call requires a network round trip. Each round trip takes only about 100 milliseconds, but hundreds of tool calls for a single task quickly add up.
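As a back-of-the-envelope check on the latency point, here is a small Python sketch. The 300-call figure is an assumption standing in for "hundreds", and the function name is hypothetical. It also assumes calls run strictly one after another, so treat the result as an upper bound when sub-agents work in parallel.

```python
def added_latency_seconds(tool_calls: int, round_trip_ms: float = 100.0) -> float:
    """Extra wall-clock time spent on network round trips for sequential tool calls."""
    return tool_calls * round_trip_ms / 1000.0


# 300 tool calls is an assumed figure, not a measurement.
overhead = added_latency_seconds(300)  # 30.0 seconds of pure network wait
```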

Removing the SDK’s capabilities to make it “safe” felt like the wrong tradeoff. So instead of changing the agent, we changed its environment. Rather than an Orchestrator Model, we built what we’re calling a Workstation Architecture.

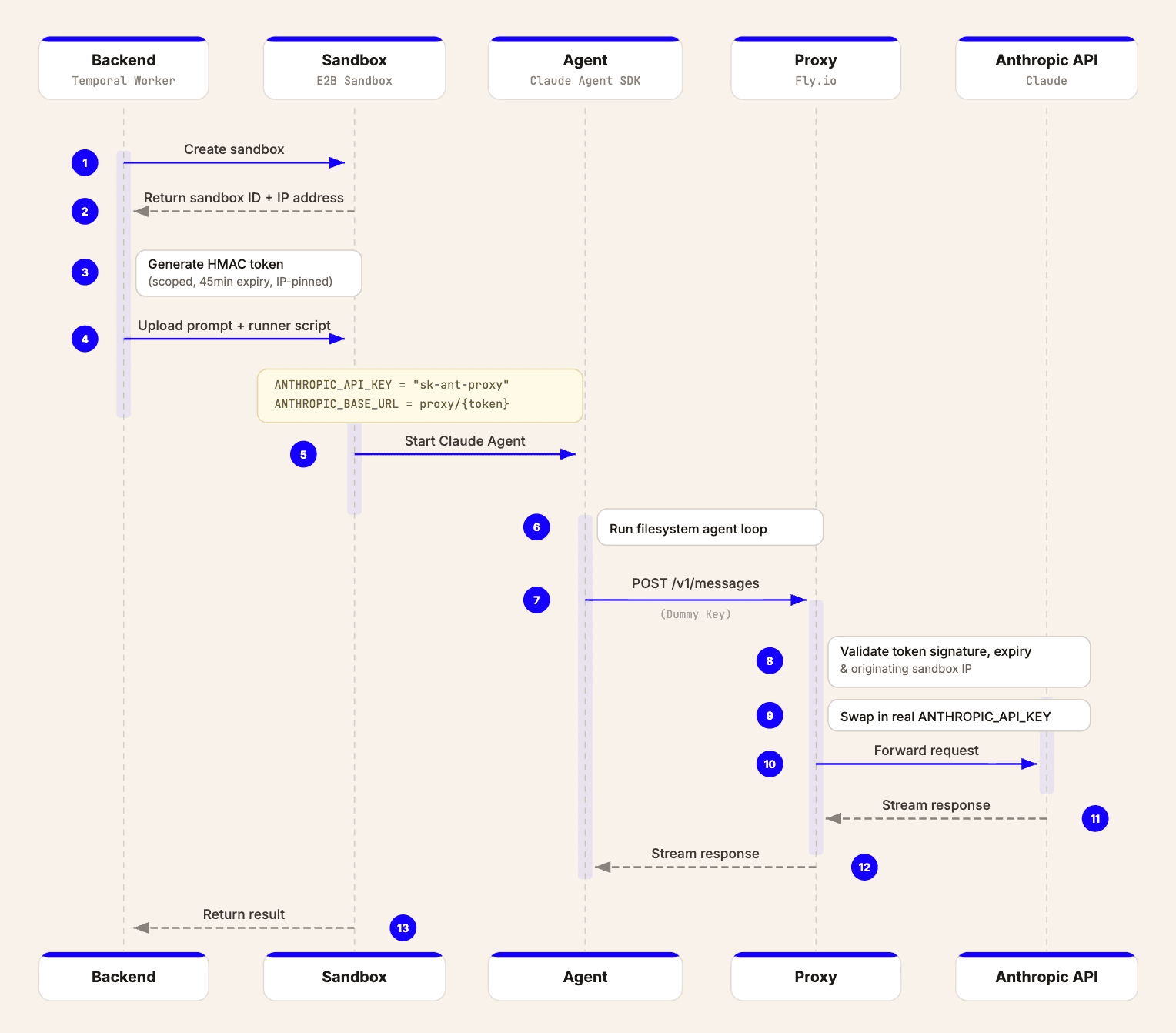

Our Workstation Architecture

We decided to stop fighting the SDK and start lying to it. After all, it can't leak what it doesn't know. We built an environment where the SDK can execute complex bash commands and edit files while remaining completely blind to its own API keys.

Step 1: The Sandbox (E2B)

The agent lives in a fresh, isolated VM for every run. It can rm -rf / or install malware; it’s a throwaway world, so it doesn't matter. While the Anthropic SDK includes internal safety hooks, the sandbox is the primary line of defense.

To keep sandbox startups quick, we pre-install everything from LibreOffice and markitdown to specialized Node and Python libraries.

Step 2: The API Key Illusion

Even inside a sandbox, environment variables are a liability because they remain accessible to the end user via prompt injection. We solve this by offloading the API key storage to a proxy.

We give the SDK a dummy key and reroute its ANTHROPIC_BASE_URL to our proxy. When the agent makes a call, the proxy performs a "Key-Swap": it strips the dummy key and injects the real ANTHROPIC_API_KEY before forwarding the request. The agent stays fully autonomous but remains technically free of secrets.
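The swap itself can be sketched in a few lines of Python. This is an illustration under our own assumptions, not the production proxy: the function names, the dummy key value, and the choice to also drop an `authorization` header are hypothetical. The `x-api-key` header is the one the Anthropic API reads for authentication.

```python
DUMMY_KEY = "sk-ant-dummy"  # hypothetical placeholder handed to the sandbox

# Headers that may carry a credential and must never be forwarded as-is.
CREDENTIAL_HEADERS = {"x-api-key", "authorization"}


def sandbox_env(proxy_url: str) -> dict:
    """Environment for the sandboxed SDK: a fake key plus the proxy as base URL."""
    return {"ANTHROPIC_API_KEY": DUMMY_KEY, "ANTHROPIC_BASE_URL": proxy_url}


def swap_key(headers: dict, real_key: str) -> dict:
    """Drop whatever credential the sandbox sent and inject the real key."""
    forwarded = {
        name: value
        for name, value in headers.items()
        if name.lower() not in CREDENTIAL_HEADERS
    }
    forwarded["x-api-key"] = real_key
    return forwarded


# Usage: what arrives from the sandbox versus what is sent upstream.
incoming = {
    "X-Api-Key": DUMMY_KEY,
    "content-type": "application/json",
    "anthropic-version": "2023-06-01",
}
outgoing = swap_key(incoming, real_key="sk-ant-real-key-held-by-proxy")
```

Header names are matched case-insensitively because HTTP clients differ in how they capitalize them. A real proxy would also need to handle hop-by-hop headers and streaming responses, which this sketch leaves out.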

Step 3: Securing the Proxy

A proxy that swaps keys is a massive liability if it’s open to the internet. Anyone could use it to run up your Anthropic bill. To prevent this, we authorize every request using a session-scoped HMAC token.

Before a sandbox even starts, our backend generates a token signed with a secret key. The token is valid only for that session’s 45-minute window. Since the Claude SDK allows for a custom baseURL but doesn't provide an interface for injecting custom headers, we embed this token directly in the URL path. This keeps the proxy a drop-in replacement that doesn't require monkey-patching the SDK's internal networking logic.

The proxy validates the session before the swap:
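A minimal sketch of both halves in Python, minting on the backend and validation on the proxy. The details are our assumptions rather than the production system: the token layout (`session.expiry.signature`), the `/s/<token>/` path prefix, the use of HMAC-SHA256, and every function name are hypothetical.

```python
import hashlib
import hmac
import time
from typing import Optional

SESSION_TTL_SECONDS = 45 * 60  # the 45-minute window from the text


def _sign(secret: bytes, payload: str) -> str:
    """Hex HMAC-SHA256 signature over the token payload."""
    return hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()


def mint_token(secret: bytes, session_id: str, now: int) -> str:
    """Backend side: issue a token that expires SESSION_TTL_SECONDS after `now`."""
    payload = f"{session_id}.{now + SESSION_TTL_SECONDS}"
    return f"{payload}.{_sign(secret, payload)}"


def base_url_for(proxy_origin: str, token: str) -> str:
    """Embed the token in the path, since only the base URL is configurable."""
    return f"{proxy_origin}/s/{token}"


def validate_path(secret: bytes, path: str, now: int) -> Optional[str]:
    """Proxy side: return the upstream path if the token is valid, else None."""
    parts = path.split("/", 3)  # expected shape: ["", "s", token, rest]
    if len(parts) != 4 or parts[0] != "" or parts[1] != "s":
        return None
    payload, _, signature = parts[2].rpartition(".")
    # Check the signature first, with a constant-time comparison.
    if not hmac.compare_digest(_sign(secret, payload).encode(), signature.encode()):
        return None
    _, _, expires_raw = payload.rpartition(".")
    try:
        expires_at = int(expires_raw)
    except ValueError:
        return None
    if now >= expires_at:
        return None
    return "/" + parts[3]


# Usage: the backend mints a token and hands the sandbox a base URL.
secret = b"demo-secret-shared-by-backend-and-proxy"
token = mint_token(secret, session_id="run-123", now=int(time.time()))
base_url = base_url_for("https://proxy.example", token)
```

Only after `validate_path` returns a path would the proxy swap the key and forward the request. Because the signature is verified before the expiry is parsed, a tampered expiry fails on the signature check and never reaches the time comparison.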

Real World Gotcha: Infrastructure doesn't like agent traffic. We initially hosted this on Render, but their WAF blocked our traffic instantly—an agent sending POST bodies full of bash scripts looks exactly like a Remote Code Execution (RCE) attack. We moved to the more lightweight Fly.io, where we could operate without our own firewall strangling the agent loop.

Final Architecture

The Result: By offloading authentication to a secure proxy and keeping the entire agent in a sandbox, we keep the native power of the Claude SDK while ensuring security. Even if an agent is fully compromised via prompt injection, there are no credentials to steal, no networks to poke around in, and no infrastructure to damage.

Everyone is building agents — but we’re still early.

The massive popularity of the Anthropic SDK is proof of the appetite for agents. But our experience suggests that the distance between a local demo and a production-ready service is wider than most people think.

Moving beyond a "Trusted User" model requires significant infrastructural heavy lifting that isn't yet part of the standard AI playbook. And after building this architecture, I suspect that few organizations are building agents ready for the realities of the open web.

We are still in the early days of figuring out what a professional "Agentic Stack" actually looks like. It isn't always the path of least resistance, but it’s the work that has to happen if we want our agents to do meaningful work in the real world.

If you find these kinds of infrastructure puzzles interesting, come join us!